October 8, 2021

The Ideal QA Process in Digital Advertising

Given the trend towards PPC automation, it’s no surprise that Google released Responsive Search Ads (RSAs). When these were released, it was easy for every PPC nerd to get excited. With RSAs, you can provide up to 15 headlines and 4 descriptions. The combinations Google’s AI can use to serve the right ad to the right user, based on thousands of audience signals, are nearly endless.

However, if you search for Responsive Search Ads on Google, you’ll find countless blog posts showing how Responsive Search Ads underperform compared to Expanded Text Ads (ETAs) in CTR, CVR, and other ad copy evaluation metrics. We initially had similar experiences at Metric Theory, with our account managers seeing worse performance on RSAs and trying to figure out how to get the RSAs to outperform ETAs. However, as more testing was done and more information came out on the new ad type, it became clear that evaluating RSAs against ETAs in a 1-to-1 fashion was understating the true value RSAs could bring to advertisers.

Due to the nature of RSA automation and the increased character count, RSAs are eligible to serve in search ad auctions that ETAs were not previously eligible for. Because of this, comparing RSAs directly against ETAs does not give RSAs a fair chance. In some cases, RSAs are going to show for entirely different queries than ETAs will. Instead of measuring RSA performance against ETAs, you should measure incremental lift at the ad group level to fully understand the impact the RSA has on performance.

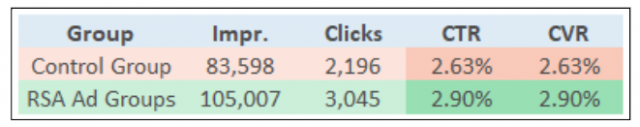

In one testing scenario, we took a campaign and split up the ad groups into two segments based on an even distribution of impression and click volume. In our control group, we continued running three ETAs in each ad group. In our test group, we added one RSA alongside the three ETAs in each ad group.
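As a rough illustration of how a split like this could be set up, here is a minimal Python sketch. It is not the exact process we used: the `AdGroup` class, the `split_ad_groups` function, and the greedy balancing approach are all assumptions made for the example. It sorts ad groups by their combined share of impressions and clicks, then assigns each one to whichever segment currently carries less volume.

```python
from dataclasses import dataclass


@dataclass
class AdGroup:
    # Hypothetical container; the field names are illustrative only.
    name: str
    impressions: int
    clicks: int


def split_ad_groups(ad_groups):
    """Greedily split ad groups into (control, test) segments with
    roughly even impression and click volume."""
    total_impressions = sum(g.impressions for g in ad_groups) or 1
    total_clicks = sum(g.clicks for g in ad_groups) or 1

    def weight(group):
        # Combined share of campaign impressions and clicks.
        return (group.impressions / total_impressions
                + group.clicks / total_clicks)

    control, test = [], []
    control_weight = test_weight = 0.0

    # Place the largest ad groups first, always into the lighter segment.
    for group in sorted(ad_groups, key=weight, reverse=True):
        if control_weight <= test_weight:
            control.append(group)
            control_weight += weight(group)
        else:
            test.append(group)
            test_weight += weight(group)
    return control, test
```

A greedy split like this will not always produce a perfectly even division, so it is worth checking the impression and click totals of each segment before launching the test.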

Comparing the ad groups with the RSA against the ad groups without the RSA, we saw a clear positive trend for the RSA ad groups in CTR and CVR:

Better yet, looking at the period-over-period analysis, we saw stronger increases in click and impression volume for the ad groups with the RSAs as well:

The control ad groups remained relatively flat in terms of impression and click volume over the period, which leads us to believe that the RSAs played a positive role in growing overall impression and click volume for the test ad groups.
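The period-over-period comparison can be expressed in a few lines of Python. This is a simplified sketch rather than our reporting setup: the function names are hypothetical, and the numbers in the usage example are made up purely to show the arithmetic. The idea is to compute the percent change for the test segment and for the control segment, then treat the difference between the two as the lift attributable to the RSAs.

```python
def pct_change(previous, current):
    """Relative change from the previous period to the current one."""
    if previous == 0:
        raise ValueError("previous-period value must be non-zero")
    return (current - previous) / previous


def incremental_lift(test_prev, test_curr, control_prev, control_curr):
    """Percent change in the test segment minus percent change in control.

    A positive value suggests the test segment (ad groups with an RSA)
    grew faster than the control segment over the same period.
    """
    return (pct_change(test_prev, test_curr)
            - pct_change(control_prev, control_curr))


if __name__ == "__main__":
    # Illustrative, made-up impression totals for two periods.
    lift = incremental_lift(
        test_prev=10_000, test_curr=12_000,
        control_prev=10_000, control_curr=10_100,
    )
    print(f"Incremental impression lift: {lift:.1%}")
```

The same calculation applies to clicks, or to ratios such as CTR and CVR, as long as both segments are measured over the same two periods.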

It is worth noting that, while we were not strictly evaluating ETAs vs. RSAs, we did see the RSAs outperform the ETAs on the primary ad copy evaluation metrics in this specific scenario:

However, if we strictly evaluated RSAs against ETAs, we would have missed out on the valuable insight that the RSAs drove incremental lifts in impressions within ad groups.

This is just one example of how to evaluate your Responsive Search Ad performance. In this scenario, we saw a healthy lift in impression and click volume in ad groups running RSAs. Even if this methodology for testing Responsive Search Ads doesn’t work out for you, we recommend that you continue testing these new ad formats. Automation in PPC is not going away, so it’s crucial that you test and leverage these new tools for success.

If you’re looking for further guidance on testing in your account, contact our team.