June 9, 2022

To Stop the CMO Revolving Door, Bring Brand and Performance Together

At first, it’s music to your ears: you’re going to manage paid search for a B2B advertiser that has only ever optimized towards conversions and cost per conversion. The advertiser is not leveraging back-end data to optimize toward actual business goals! An epic scene (by PPC standards) unfolds in your head. The new director of marketing, sitting on a pile of leads but no insights on lead quality, is the damsel in distress. You, the PPC Manager armed with insights on how to optimize down to the keyword level for Sales Qualified Leads (SQLs), are the hero coming to the rescue.

Unfortunately, life doesn’t often unfold like a movie script. Much more likely, in the scenario above, you would face messy data, and couldn’t start optimizing toward SQLs or closed deals until you set up proper tracking and gathered enough data to make statistically-valid decisions. Here are the steps you should follow to start optimizing based on back-end data as soon as possible.

Paid marketing optimizations rarely resemble a fairy tale. Image via Flickr

Before launching into any sort of strategy to optimize beyond front-end conversions, you must take stock of your inventory.

Before you move forward with developing an optimization strategy, you need to understand what’s possible and what’s not. Understanding what you have and what you need will allow you to build both an immediate strategy forward and a road map for what you should track in the long term.

Don’t rush to start optimizing toward back-end lead data that you know is flawed – instead take the time to begin tracking and collecting data that will allow for effective optimizations in the future.

If you know you want to see disqualified vs. accepted leads and SQLs down to the keyword level, you need to implement tracking parameters on your ads (or keywords), and hidden fields on your LPs to do so. It could take months to aggregate enough data to make confident decisions, so don’t waste a day getting it in place.

At Metric Theory we have an expression, “control the controllables,” which means that you should not focus on areas where you cannot affect change. Similarly, if your data is not perfect, that doesn’t mean you can’t find opportunities to make optimizations.

If you only have 6 months of SQL data at the channel level, you’re not in an ideal spot, but that’s not in your immediate control. Work backwards from your ultimate goal to figure out what you can optimize toward. While disqualified vs. accepted leads is a far cry from full SQL data, it is still one step closer to higher quality leads. Leads are typically disqualified if they’re missing information, come from a bogus email address, etc. If your marketing automation platform can capture campaign-level disqualified lead data, you should use that.

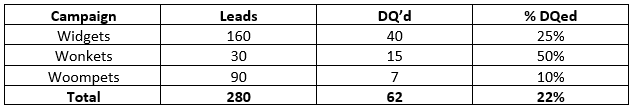

In the scenario above, your Wonkets campaign is bringing in a much higher percentage of junk leads. Since you don’t know lead to SQL rates at the campaign level, you can at least focus on accepted leads instead of front-end conversions. Going off your 22% account-wide average, you could use each campaign’s percentage disqualified leads as a modifier on your target CPA.

If the account-wide CPA is $100 and you have a campaign that has a 44% disqualified rate, your target CPA for that campaign should be (.22/.44)*$100 = $50. You should aim for a $50 CPA, since conversions from that campaign are half as likely to be accepted leads as the account average.

You can apply this thought process to any scenario where you have data at some lead status level (but not at your goal level). I have seen clients who have leads that go through an alphabet soup worth of statuses: DQed, A1, A2, B1, B2, B3, Opportunity & Won. This client wanted me to optimize toward Opportunities, but I only had a handful of Opportunities, and the CRM system dropped the campaign/ad group/keyword tag once a lead reached Opportunity status anyway. A1-B3 represented different levels of leads, but anything B1 and above was considered a good lead. Perfect! I just worked off of B1 leads. Using the method above, and looking at percent of B1 leads by campaign, I adjusted CPA targets based on B1 performance.

Finally, if you don’t have any data on lead statuses at all, you can try to evaluate quality based on what the lead entered into the fields on the lead form. Typically, there are fields for company size, industry, title, etc. If there are categories that correlate with stronger performance for your product or industry, you can optimize toward those. Just be careful, because paid search keyword and campaign themes don’t always correlate with the top-performing overall demographics for your company or product. For example, you may prefer leads from companies of 500+, but you shouldn’t discount your widgets campaign just because it skews toward smaller companies, as it might still deliver very high lead quality and close rates, which you won’t see until we get accurate data.

Even if your back-end data isn’t fairy tale material, pretend you’re in an action movie and thrive in the chaos. Assess the data you do have, right your ship moving forward & work with what you’ve got and you might still get to ride off into the sunset.